JINR develops platform for plant disease detection

News, 18 April 2023

Staff of the Laboratory of Information Technologies at JINR have developed an online platform, pdd.jinr.ru, for detecting diseases of indoor and agricultural plants. The platform relies on convolutional neural networks, which are widely used for image classification. Its neural network architecture can detect various diseases and pests with an accuracy of more than 95%. At the beginning of 2023, specialists increased the number of classes in the general model of pdd.jinr.ru to 60. They also developed a couple of specialised models for popular indoor plants such as dracaena and spathiphyllum.

According to estimates compiled by the Food and Agriculture Organization (FAO) of the United Nations, up to a third of the world’s crops are lost to pests and diseases every year. Specialists all over the world are therefore working on automating plant disease detection. In 2017, a team of researchers from the Laboratory of Information Technologies at JINR won a grant from the Russian Foundation for Basic Research (RFBR) to develop a comprehensive system for detecting plant diseases based on images and text descriptions.

The team designed a platform that it continues to develop to this day. It has several user entry points and implements a collection of models. When processing a user request, the algorithm first applies a general model for diseases and pests. The neural network then determines the type of plant. If there is a model for this crop, the user also gets a crop-specific prediction in addition to the general one. “Responding to a user request, the platform displays the three closest classes to the uploaded image. Thus, in most cases, it makes it possible to correctly recognise the disease and get treatment suggestions,” said Alexander Uzhinskiy, a leading programmer at MLIT JINR and a co-author of the research.
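
The "three closest classes" step can be illustrated with a minimal sketch. It assumes each class is represented by a reference feature vector and that closeness is measured by Euclidean distance; the article does not say how the platform ranks classes, so the function `top_k_classes`, the class names, and the 2-D vectors below are all hypothetical.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two equal-length feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def top_k_classes(query, class_vectors, k=3):
    """Return the k class names whose reference vectors lie closest to the query."""
    ranked = sorted(
        class_vectors,
        key=lambda name: euclidean(query, class_vectors[name]),
    )
    return ranked[:k]


# Hypothetical 2-D reference vectors; real embeddings have many more dimensions.
class_vectors = {
    "healthy": [0.0, 0.0],
    "powdery_mildew": [1.0, 0.0],
    "rust": [0.0, 2.0],
    "spider_mite": [5.0, 5.0],
}

# Feature vector of an uploaded image (assumed to come from the trained network).
query = [0.9, 0.1]
print(top_k_classes(query, class_vectors))
```

Showing three candidates rather than one gives the user a chance to pick the right diagnosis even when the top prediction is wrong.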

At present, the pdd.jinr.ru platform includes models for 19 agricultural and ornamental crops, including barberry, grape, cherry, blueberry, strawberry, corn, cucumber, pepper, wheat, currant, tomato, cotton, apple, orchid, and rose. A general model for all crops recognises 55 different diseases and pests. Experts have collected more than 4,000 images in the database and received more than 40,000 requests from users. Anyone can use the platform, from agricultural holdings to novice gardeners, who will appreciate the plant treatment suggestions verified by professional agronomists.

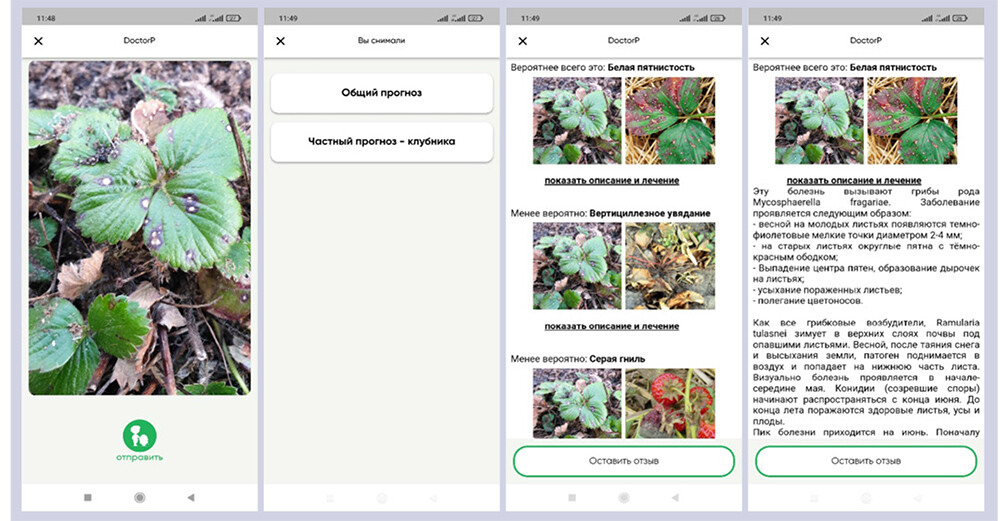

Fig. 2. Examples of DoctorP application interfaces

“Users should have different tools for interacting with the platform. They can run recognition tasks using a web portal. However, the main user entry point has become the DoctorP mobile application for Android,” Alexander Uzhinskiy explained. Since the launch of the application, more than 10,000 people have used it. Experts are currently developing an application for iOS.

According to Alexander Uzhinskiy, image classification tasks are often solved with a well-proven convolutional neural network pretrained on a large image dataset. Experts replace its last layer, which is responsible for classification, with a new one that they train on photos from the subject field. This approach is called transfer learning. As a rule, it requires hundreds of images. This is where the researchers faced their biggest challenge: collecting enough photos of plants to train a neural network. The open databases available at that time contained synthetic images that differ significantly from real-life ones: each leaf was cut off, straightened, placed on a static background and evenly illuminated. “Such images are suitable for scientific purposes, and we have obtained a good result. The model reached 99% classification accuracy. However, it made a mistake in 50% of cases when processing real-life photos made by users. We had to collect an image database ourselves,” Alexander Uzhinskiy continued. The scientists had to collect as many real-life pictures of plants as possible, with different illumination, positions, shooting scales, and so on. As a rule, specialists need hundreds, or better, thousands of images for each class to achieve good results.

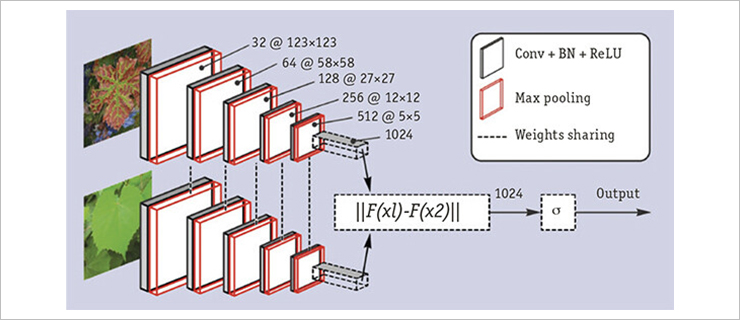

Fig. 3. Siamese neural network architecture used in the early stages of the research

Open sources such as online communities and forums provided only 20 to 50 images for each disease class. The developers therefore needed methods that give good results with a small training sample. To solve the problem, they used a Siamese (twin) neural network. Although it had not previously been used for plant disease classification, it had proved effective at distinguishing faces. “This method uses twin networks. A pair of images of the same or different classes is passed to their inputs. As a result of training, the network should learn to place the multidimensional vectors of images of different classes far apart in the feature space. This training approach made it possible to obtain 98% accuracy in plant disease detection,” the scientists commented.
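
The pairwise training objective described above can be sketched as follows. The article does not name the loss function used with the Siamese network; this example assumes the commonly used contrastive loss, and the function names and the `margin` value are illustrative rather than taken from the platform.

```python
import math


def distance(a, b):
    """Euclidean distance between two feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def contrastive_loss(vec_a, vec_b, same_class, margin=1.0):
    """Contrastive loss for one pair of feature vectors (assumed formulation).

    Pairs of the same class are penalised for being far apart.
    Pairs of different classes are penalised only while they are
    closer than `margin`.
    """
    d = distance(vec_a, vec_b)
    if same_class:
        return d ** 2
    return max(0.0, margin - d) ** 2


# Hypothetical feature vectors produced by the two twin branches.
print(contrastive_loss([0.0, 0.0], [3.0, 4.0], same_class=True))
print(contrastive_loss([0.0, 0.0], [3.0, 4.0], same_class=False))
print(contrastive_loss([0.0, 0.0], [0.0, 0.0], same_class=False))
```

Because the network learns from pairs, even 20 to 50 images per class yield many training examples, which is one reason the approach suits small datasets.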

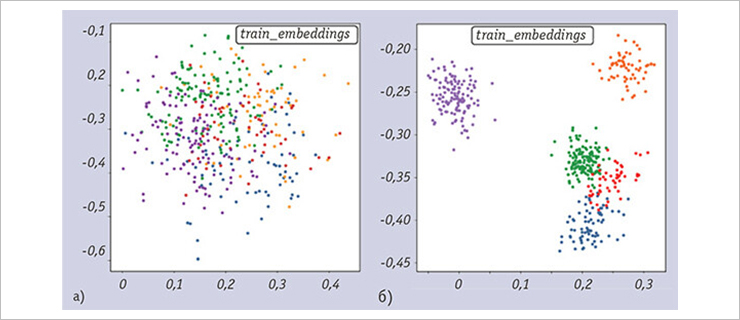

Experts have optimised the platform twice, because accuracy decreased as the number of image classes in the database grew. The first time, to improve prediction quality, the scientists used a triplet loss function. This approach uses three neural networks. Researchers pass two images of the same class and one image of a different class to their inputs. The aim of training is to teach the system to minimise the distance between feature vectors of images of the same class and to maximise the distance between feature vectors of images of different classes in the multidimensional space.
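
A minimal sketch of the triplet objective follows. It assumes the standard margin-based formulation with Euclidean distance; the exact variant and margin used on the platform are not given in the article, so the names and values here are illustrative.

```python
import math


def distance(a, b):
    """Euclidean distance between two feature vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def triplet_loss(anchor, positive, negative, margin=1.0):
    """Margin-based triplet loss for one triplet (assumed formulation).

    anchor and positive belong to the same class, negative to a different one.
    The loss is zero once the negative is at least `margin` farther from the
    anchor than the positive is.
    """
    return max(
        0.0,
        distance(anchor, positive) - distance(anchor, negative) + margin,
    )


# Well-separated triplet: the positive is near, the negative is far.
print(triplet_loss([0.0, 0.0], [0.0, 1.0], [3.0, 4.0]))

# Badly placed triplet: the negative is closer to the anchor than the positive.
print(triplet_loss([0.0, 0.0], [3.0, 4.0], [0.0, 1.0]))
```

Minimising this value over many triplets pulls same-class vectors together and pushes different-class vectors apart.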

Fig. 4. Representation of the extracted image vectors of different classes in 2D: (a) before training network with the triplet loss function, (b) after training

The next step in optimising the architecture and training was the use of algorithms that search for optimal augmentation settings (AutoAugment). Augmentation is the artificial modification of the images on which the neural network is trained, applied when the number of these images is small: for example, changing the angle of the object or the brightness, or cropping part of the object. In addition, the base neural network of the platform was replaced with a network specially trained on a variety of plant images. As a result, the models in the system maintain an accuracy of over 95% even when the number of classes exceeds 50.
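
The kinds of image changes mentioned above can be sketched in a few lines. This is a hand-written illustration of augmentation on a tiny greyscale image stored as nested lists, not the AutoAugment search itself; the function names, probability, and parameter ranges are assumptions chosen for the example.

```python
import random


def hflip(image):
    """Mirror the image horizontally."""
    return [row[::-1] for row in image]


def adjust_brightness(image, factor):
    """Scale pixel values by `factor`, clamped to the 0..255 range."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in image]


def crop(image, top, left, height, width):
    """Cut out a height x width window starting at (top, left)."""
    return [row[left:left + width] for row in image[top:top + height]]


def augment(image, rng):
    """Apply a random flip, brightness change, and crop (illustrative settings)."""
    out = hflip(image) if rng.random() < 0.5 else image
    out = adjust_brightness(out, rng.uniform(0.7, 1.3))
    h, w = len(out), len(out[0])
    top = rng.randint(0, h // 4)
    left = rng.randint(0, w // 4)
    return crop(out, top, left, h - h // 4, w - w // 4)


# A hypothetical 4x4 greyscale image; each seed yields a different variant.
image = [[10 * (r + c) for c in range(4)] for r in range(4)]
variants = [augment(image, random.Random(seed)) for seed in range(3)]
for variant in variants:
    print(variant)
```

An automated search such as AutoAugment would choose which operations to apply and how strongly, instead of the fixed ranges used here.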

The authors have developed a software interface that lets third parties use the platform’s resources, for example, from their own mobile applications. For instance, the Garden Retail Service company (formerly known as Fasko) has built disease detection into its HoGa mobile application. In a joint project with the world-class scientific centre “Agrotechnologies of the Future”, based at the Moscow Timiryazev Agricultural Academy, researchers used pdd.jinr.ru neural network models to track the effect of illumination on plant growth. This made it possible to choose optimal schemes for growing crops.

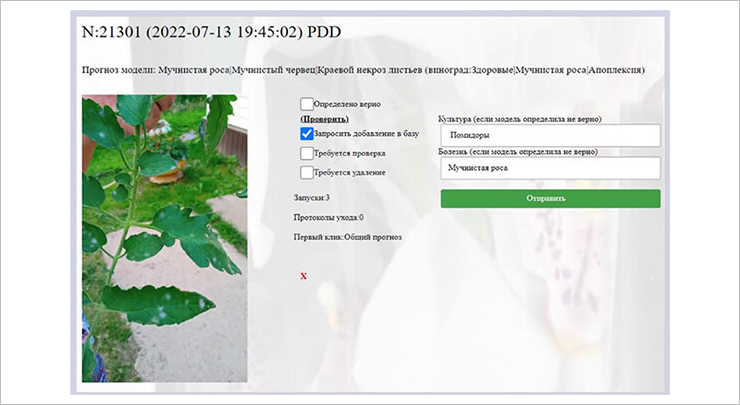

Fig. 5. Interface for an expert agronomist

Experts continue to develop the platform. They update its database with user images and thereby improve the accuracy of the models. In summer, images of agricultural crops such as cucumbers, tomatoes, and strawberries are added to the platform; in winter, mostly photos of indoor plants. In the future, specialists will add video stream processing, models for detecting deficiencies of basic elements (nitrogen, phosphorus, calcium, iron, etc.), and tools for generating growing recommendations and tracking the development of the most popular crops.

The following MLIT JINR scientists were involved in the development of the platform: chief researcher Gennady Ososkov, trainee researcher Pavel Goncharov, leading programmers Alexander Uzhinskiy and Andrey Nechaevskiy, and Artem Smetanin, a specialist with a master’s degree in machine learning. An article by Alexander Uzhinskiy on the development of the plant disease detection platform was published in the journal “Open Systems. DBMS” No. 3, 2022.